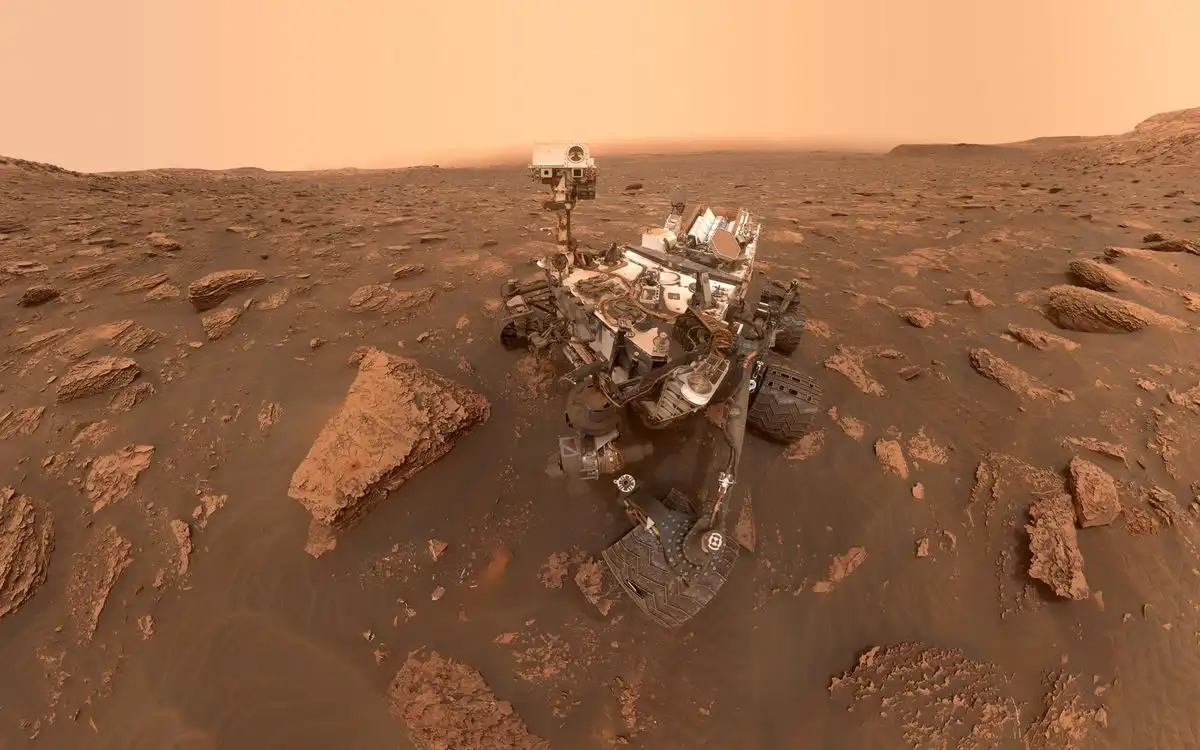

The Curiosity rover has been operating on Mars for 13 years, achieving incredible longevity due to continuous maintenance and software updates from NASA's Jet Propulsion Laboratory. Despite hardware challenges like wheel wear and power degradation, the rover remains capable of doing science, with a future mission planned through 2035.

Author Eric Ries discusses his new book, "Incorruptible," which explores why good companies fail due to "financial gravity" and how some companies resist it. He aims to show how others can avoid the same outcome.

Thomas Nagel's article "What Is It Like to Be a Bat?" argues that subjective experience cannot be reduced to physical explanation. He claims that understanding what it is like to be a bat would require taking up the bat's point of view.

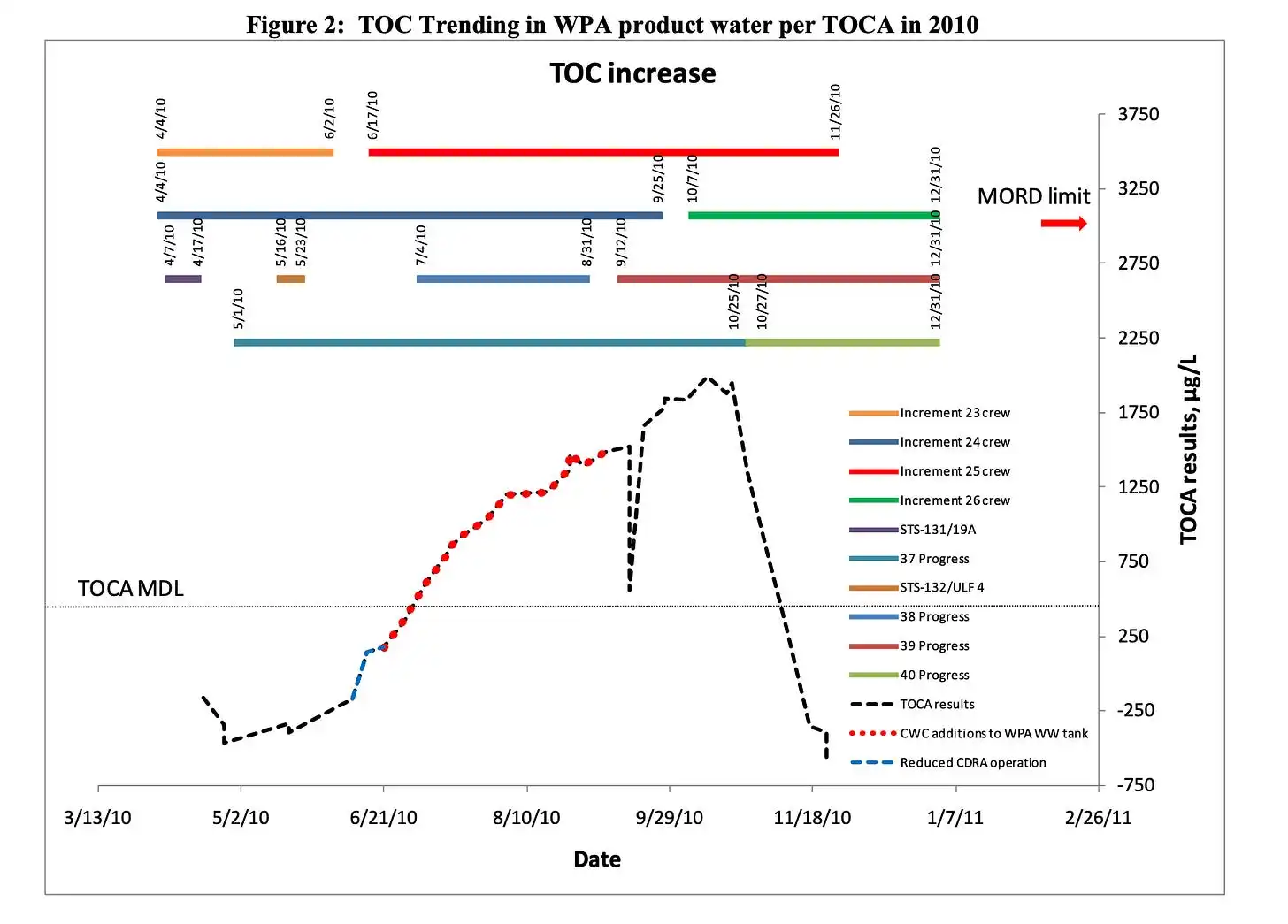

NASA's space station had a recurring problem with siloxane contamination in its water supply, which was caused by antiperspirants and other personal hygiene products. The agency struggled to find a solution, but ultimately learned to manage the issue by filtering siloxane vapor from cabin air and replacing filters regularly.

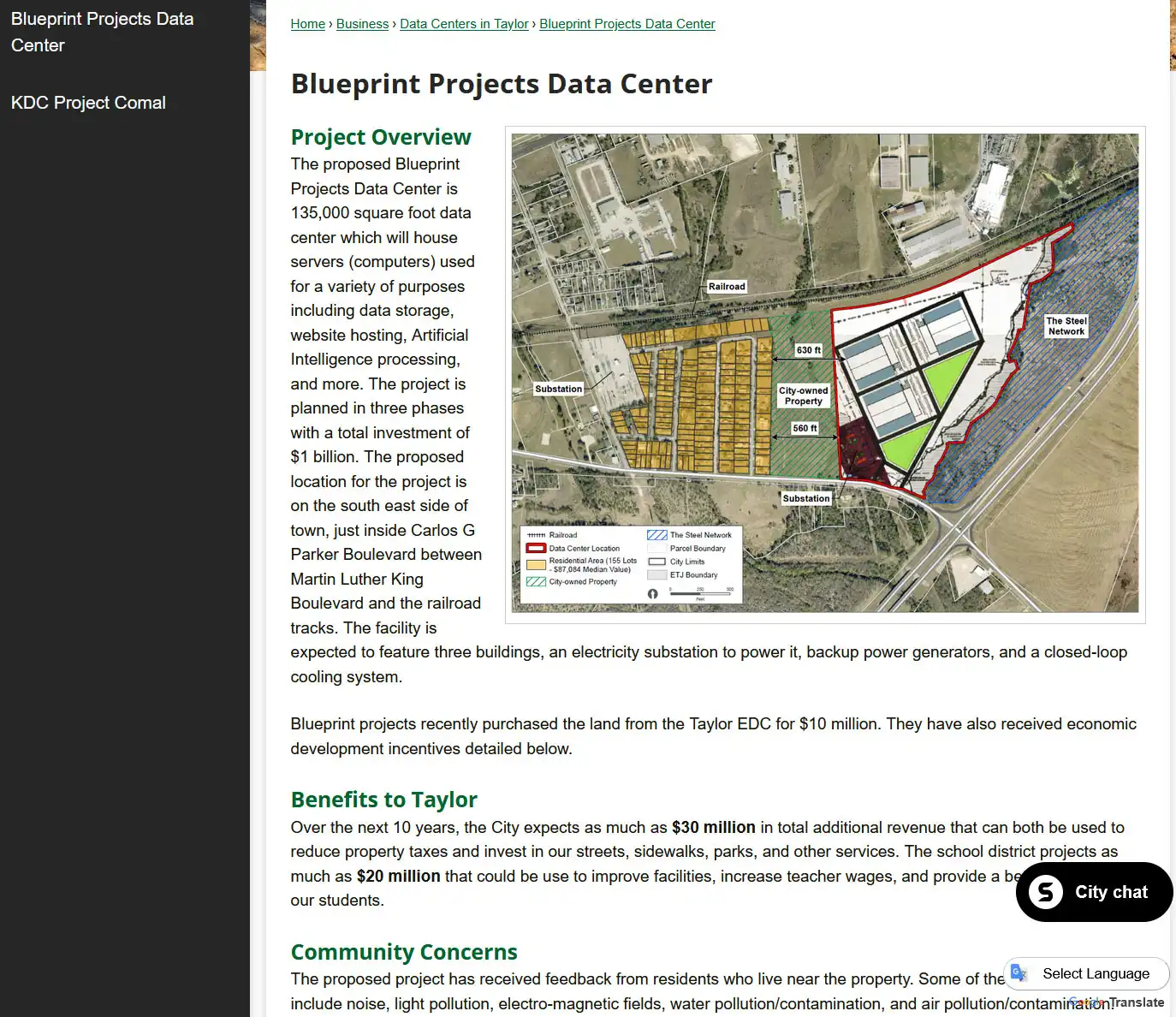

A farmer donated 87 acres of land in Taylor, Texas, to the city in 1999 for $10 with the condition it be used as a park, but it was sold to a data center developer in 2025 for $10M. Locals are fighting the development in court, citing the original deed and concerns about health risks, noise, and property values, with a decision pending in the Third Court of Appeals.

But not everyone is happy with the restrictions, and a number of cybersecurity researchers and professionals have aired complaints online. “[Fable] rejects any request that could be tangentially cyber related. Even innocuous tasks like reading a blog post,” said Valentina “Chompie” Palmiotti, a well-known security researcher who works at IBM X-Force. When a prompt triggers its guardrails, ...

A utility company's website was hampered by a lengthy application process. Rebuilding the site with Astro, using an HTML-first approach, doubled the company's users overnight.

With the release of Claude Fable, it is clear that Anthropic is progressing from poems to enterprise-scale narrative objects. To keep pace with competitors, the company is developing a broad portfolio of models optimized for the full literary stack.

Burr is a Python framework for building AI applications, with built-in state management and debugging tools. It is designed for modeling complex behaviors and is presented as easier to use than platforms like LangChain.

The intersection of AI and politics is like the Hobbits and Treebeard: AI advances at a lightning pace while policy moves slowly. This mismatch in timescales is painful, and meeting the moment requires collective action. To address the risks and opportunities of AI, we need to re-imagine five perennial policy areas: regulation and public safety, macroeconomics and tax policy, ...

Andrey is a software engineer at AWS who created Atlasphere.io to synchronize cloud infrastructure diagrams with actual AWS accounts. He is asking people who try the app for feedback, specifically what they like and dislike.

Japan's railway network expanded from a single line in 1872 to over 9,000 stations by the 21st century. The map shows stations opening over time, revealing Japan's geography through railway development.
